The AI Methodology Gap: Why Bottom-Up Has a Ceiling

Engineer-driven AI adoption solves a thousand local problems. The ones that actually move the needle — specs, contracts, sign-off — aren't local.

Written with Claude Opus.

My last post ended with a question it didn’t quite answer. If Engineer A’s AI review and Engineer B’s AI review produce different verdicts on functionally equivalent CDC crossings, and a new model release can flip which of them was right, the obvious follow-up is: whose job is it to make sure the company’s CDC sign-off doesn’t float on whichever model happened to be open the afternoon of the review?

The CDC tools already catch the structural violation. That’s not the issue - a modern CDC tool flags the OR-before-sync the moment the RTL compiles, and has for years. The divergence shows up one step downstream, at the waive-or-fix decision. Whether a flagged crossing gets waived as a benign same-domain glitch or fixed as a real violation has always been a judgment call, and AI is now embedded in that judgment step without any company-level decision about whose judgment governs. The next generation of AI-aware sign-off tools may well pull that judgment step back into the flow - that is exactly what a tool-enforced layer would look like - but whether they do or don’t, the decision about which layer is canonical for a given company is not a decision the tool makes. It is a decision the company makes, and most companies deploying AI into chip design have not made it yet.

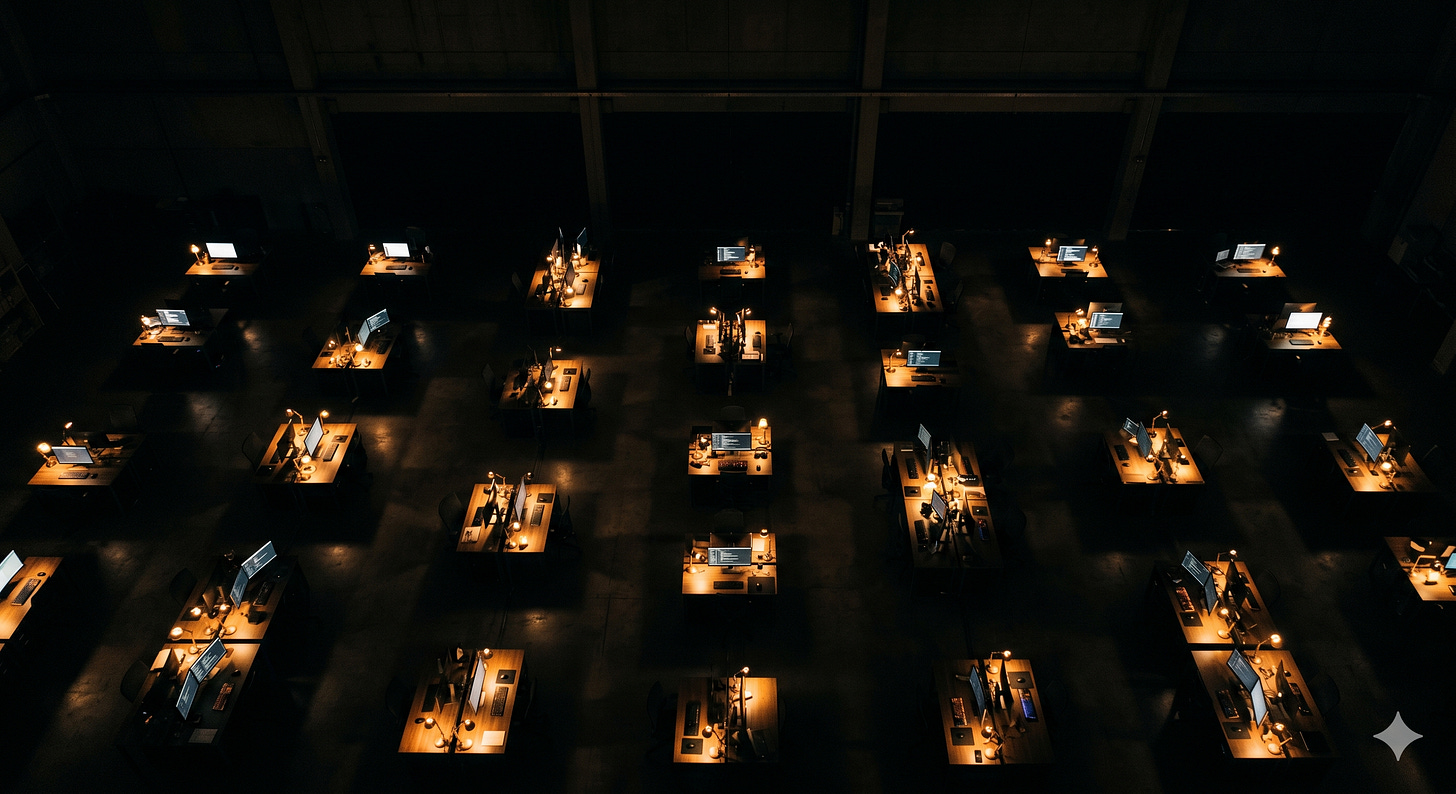

The version of AI adoption most companies are actually running looks like this: encourage engineers to experiment, share what works, let the good stuff percolate up. It produces real wins. It also has a ceiling - a structural one, not a motivational one - and the problems above the ceiling are the ones that matter most for silicon. This post is about where the ceiling is and why it’s where it is.

Point solutions are genuine wins

Let me start by being clear about what’s working. An engineer who writes a Python-plus-AI script to parse a timing report and flag outliers has solved their own friction point on their own schedule. They did not file a ticket with the CAD team and wait six months. They did not negotiate with a vendor. They opened Claude or Cursor, described the problem, iterated for an afternoon, and moved on. That is real productivity. Multiply it across a few hundred engineers and a few hundred little friction points, and the aggregate is substantial.
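To make that concrete, here is a minimal sketch of the kind of afternoon script I mean. The report format (one `<path_name> <slack_ns>` pair per line) and the function names are assumptions for illustration, not the output of any real timing tool, and the outlier rule (distance from the median in units of median absolute deviation) is just one reasonable choice.

```python
from statistics import median

def parse_slacks(report_text):
    """Parse lines of the assumed form '<path_name> <slack_ns>' into a dict."""
    slacks = {}
    for line in report_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        name, value = line.rsplit(None, 1)
        slacks[name] = float(value)
    return slacks

def flag_outliers(slacks, threshold=3.0):
    """Return path names whose slack sits more than `threshold` median
    absolute deviations (MAD) away from the median slack."""
    values = list(slacks.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # all slacks (nearly) identical; nothing stands out
    return sorted(n for n, v in slacks.items() if abs(v - med) / mad > threshold)

if __name__ == "__main__":
    report = "a 0.10\nb 0.12\nc 0.11\nd 0.09\ne -1.50\n"
    print(flag_outliers(parse_slacks(report)))
```

The point is less the statistics than the ownership: the engineer who wrote it knows what a sane slack distribution looks like for their block and can tell immediately whether the flagged paths make sense.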

The same pattern applies to block-level assertion drafting, to ad-hoc log analysis, to writing small tools that bridge between incompatible formats, to parsing design-review minutes into trackable items. These are exactly the problems an engineer can see clearly because they live inside them. They’re also exactly the problems where the engineer has the context to verify the output - they know what a correct timing-report analysis looks like, they know what a sane block-level assertion reads like, and they can spot when the AI has produced something that sounds right but isn’t.

This is AI for the engineer, in the sense Post 1 used the phrase. It’s liberating, it’s fast, and no one above them needs to sign off on it for it to work. Companies should encourage it. The mistake is in what comes next.

What point solutions can’t reach

The problems that actually define a company’s silicon capability - the ones that show up in tape-out outcomes, integration schedules, and product competitiveness - are not block-local. They are cross-block, cross-team, or cross-release, and no engineer can solve them from their desk.

Consider spec-to-design. Making it work requires that every block’s spec use a consistent structure, that the RTL coding standards match what the model is trained or prompted to produce, that the verification plan references the spec in a format the automation can check, and that the sign-off criteria treat the spec as the authoritative source. No individual engineer can deliver that. They don’t have authority over the spec format, they don’t write the coding standards, they don’t set the verification-plan template, and they certainly don’t modify the sign-off flow. The most a well-intentioned engineer can produce is a spec-to-design script that works for their own block and breaks the moment it meets a neighboring block’s conventions. The bottom-up version gives you five incompatible prototypes, none of which survives integration.

Design by Contract is the same shape. Contracts work when every block has them in a consistent schema, when integration tests consume the schema, when sign-off references it. Any single engineer writing contracts for their own block is doing useful work, but the methodology only pays off when the entire SoC speaks the same contract language, and no engineer can make that happen from their desk.
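A rough sketch of what "integration tests consume the schema" could look like, assuming a deliberately tiny, hypothetical contract format (a dict with `block`, `clock_domain`, `inputs`, and `outputs`). The field names and both checks are illustrative assumptions, not an established contract standard.

```python
# Hypothetical minimal schema every block contract is expected to follow.
REQUIRED_FIELDS = {"block", "clock_domain", "inputs", "outputs"}

def schema_violations(contracts):
    """Map each block name to the sorted list of required fields it is missing."""
    problems = {}
    for contract in contracts:
        missing = REQUIRED_FIELDS - contract.keys()
        if missing:
            problems[contract.get("block", "<unnamed>")] = sorted(missing)
    return problems

def unmatched_inputs(contracts):
    """Return (block, signal) pairs where a block expects an input that
    no block in the SoC promises as an output."""
    provided = {sig for c in contracts for sig in c.get("outputs", [])}
    return sorted(
        (c.get("block", "<unnamed>"), sig)
        for c in contracts
        for sig in c.get("inputs", [])
        if sig not in provided
    )

if __name__ == "__main__":
    contracts = [
        {"block": "dma", "clock_domain": "clk_a", "inputs": ["req"], "outputs": ["ack"]},
        {"block": "cpu", "inputs": ["ack", "irq"], "outputs": ["req"]},
    ]
    print(schema_violations(contracts))
    print(unmatched_inputs(contracts))
```

Neither check is hard to write. What no single engineer can supply is the guarantee that every block in the SoC fills in the same fields, which is the only condition under which the second check means anything.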

CDC sign-off under AI, which Post 5 walked through in detail, is the same kind of problem arriving from a different angle. The question isn’t whether any particular engineer can use AI well on their own crossings. The question is which analysis the company’s sign-off rests on. If the answer is “whichever one each engineer chose,” then the variance the CDC tool was invented to eliminate has been reintroduced above the tool. Someone has to decide, with authority that extends across every block in the SoC, which AI layer is canonical. That decision is not an engineer’s to make.

Sign-off is a named-owner process

Chip design has a vocabulary for this kind of problem, and it’s worth using it. CDC sign-off, timing sign-off, power sign-off, DFT sign-off - each of these is an auditable process with a named owner, a documented flow, an audit trail, and a filed report. When a failure shows up in silicon, the trail leads back to a specific person who signed a specific document on a specific date. That isn’t bureaucracy. It’s the mechanism by which the enterprise guarantees that rigor was applied and that someone is accountable for the verdict.

The AI layer inside any of these flows has to inherit that structure or it is not sign-off. It is an opinion. Specifically, if the methodology permits Engineer A’s assistant to say “fix this” and Engineer B’s assistant to say “fine under the level protocol” for functionally equivalent crossings in the same SoC, the integration engineer is no longer reviewing the design - they are adjudicating between model outputs. When the failure shows up in silicon, the person who signed the report is left holding the bag for a decision made by whichever model was hosted at their desk the week of the review.
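The divergence described here is at least mechanically detectable. The sketch below assumes each review record carries a `signature` field, a hypothetical label for the structural pattern of the crossing as reported by the CDC tool, along with the `verdict` the reviewer filed. The record layout is invented for illustration.

```python
from collections import defaultdict

def divergent_verdicts(reviews):
    """Group reviews by structural signature and return the signatures
    that received more than one distinct verdict."""
    by_signature = defaultdict(set)
    for review in reviews:
        by_signature[review["signature"]].add(review["verdict"])
    return {
        sig: sorted(verdicts)
        for sig, verdicts in by_signature.items()
        if len(verdicts) > 1
    }

if __name__ == "__main__":
    reviews = [
        {"crossing": "blk_a.x", "signature": "or_before_sync", "verdict": "fix", "reviewer": "A"},
        {"crossing": "blk_b.y", "signature": "or_before_sync", "verdict": "waive", "reviewer": "B"},
        {"crossing": "blk_c.z", "signature": "two_flop_sync", "verdict": "waive", "reviewer": "A"},
    ]
    print(divergent_verdicts(reviews))
```

Detecting the conflict is the easy part. Deciding which verdict stands, and who has the authority to say so, is not something a script settles.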

What the process requires instead is what Post 5 closed on: a shared tool-enforced layer, uniform across the team, grounded in the same structural analysis, auditable in the same way the rest of sign-off is. Disagreements resolve in the graph rather than in model temperature or training cutoff. This is not an optional refinement. It is the minimum condition under which “AI in CDC review” describes a sign-off flow rather than a collection of individually persuaded reviewers.

And the thing to notice is that a tool-enforced layer like that is categorically not something an engineer builds from their desk. It requires tool choice, corpus curation, agent orchestration, model-version pinning, audit instrumentation, and the authority to tell every engineer in the group to use this and not that. It is methodology work, and methodology has always been an org function.
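As a small illustration of two items on that list, model-version pinning and audit instrumentation, here is a sketch under stated assumptions. `ReviewConfig`, its fields, and the record layout are hypothetical names invented for this example. A real flow would also have to cover tool versions, corpus snapshots, and access control.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class ReviewConfig:
    """Hypothetical description of the sanctioned AI review setup."""
    model_id: str       # internal name of the approved model
    model_version: str  # pinned release, never "latest"
    prompt_sha256: str  # hash of the approved review prompt

def config_fingerprint(config):
    """Stable hash of the configuration, suitable for an audit trail."""
    canonical = json.dumps(asdict(config), sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def audit_record(config, crossing_id, verdict, reviewer):
    """Build the record filed alongside a waive-or-fix decision."""
    return {
        "crossing_id": crossing_id,
        "verdict": verdict,
        "reviewer": reviewer,
        "config_fingerprint": config_fingerprint(config),
    }

def uses_pinned_config(record, pinned):
    """True only if the review was produced under the sanctioned configuration."""
    return record["config_fingerprint"] == config_fingerprint(pinned)
```

A sign-off check could then reject any record for which `uses_pinned_config` is false. Writing that check is trivial. Having the standing to make it mandatory for every engineer in the group is the part that requires an org function.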

ChipNeMo was a Jensen-level decision

The clearest industry example of the kind of AI work that can only happen with real organizational mandate is NVIDIA’s ChipNeMo. Twenty-three billion tokens of proprietary internal data, thirty years of institutional design history, infrastructure investment sized for deployment to eleven thousand engineers. That is not a staff engineer’s 20% project. It is not an initiative a good team pushed up from the bottom. It is a multi-year commitment that required a CEO-level decision that AI for chip design was strategically central to the company, and the decision was made by someone with the authority to say yes.

Most companies will never build their own ChipNeMo, for reasons Post 1 went into - the corpus isn’t there, and the handful of companies with the corpus are already the ones doing it. But the analogy is what matters for everyone else. The company-scale AI decisions - which commercial models are canonical, which EDA integrations are supported, which agents are sanctioned for which tasks, which flows are in scope and which are off-limits, who owns the methodology and whom engineers file requests against - are leadership-level decisions. They don’t get made at the engineer level because they can’t be made at the engineer level. The engineer level can’t enforce them.

When a company leaves the company-scale decisions unmade and tells the engineers to experiment, what it gets is a very energetic floor of point solutions and a ceiling it never breaks through. The floor is a real asset. The ceiling is the problem.

The cost-cutting misread

One last trap worth naming, because it’s running in multiple companies right now and it is orthogonal to everything above. The misread is this: AI makes engineers more productive, therefore you can run the same design with fewer or cheaper engineers, therefore senior headcount is a cost-cutting target. It is a misunderstanding of what AI actually replaces.

AI replaces the activity of generating code - the typing, the boilerplate, the repetitive structural work. It does not replace the judgment that decides whether the generated code is correct, whether the spec it was generated from describes the right design, whether the verification plan actually exercises the risky paths, whether the sign-off report tells the truth about what was checked. The judgment layer is not an adjunct to the activity. It is the load-bearing piece. When you remove the activity, you do not reduce the need for judgment - you increase it, because the activity used to filter for competence along the way, and now it does not.

A company that cuts senior engineers because AI made juniors “as productive” may well have increased its throughput of written code. It has also reduced its capacity to tell whether the code is working. That cost does not show up in Q1 headcount metrics. It shows up at bring-up, where the engineers who would have spotted the problem are either not in the room, or are in the room but have been moved into pure review roles without the technical runway to pattern-match what they’re looking at. It is a cost that accrues silently and presents all at once.

It also raises a separate question I want to come back to in the next post: if AI is now doing the activity that used to teach junior engineers the judgment they grow into, where do the senior engineers of five and ten years from now come from? That is its own problem, and it deserves its own piece.

Marco Brambilla is a semiconductor industry veteran with 25 years in chip design, most recently as Senior Technical Director at Meta Reality Labs. He writes about AI, chip design, and the future of hardware engineering at Above the RTL.